
github-actions[bot] committed · Commit ae15dbe · 1 Parent(s): 1d0d685

🚀 Automated OCR deployment from GitHub Actions

- .gitattributes +3 -35
- =0.1.4 +55 -0
- README.md +69 -8
- app.py +186 -0
- images/card.jpg +3 -0
- images/demo.png +3 -0
- images/google.png +3 -0
- requirements.txt +0 -0

.gitattributes CHANGED

@@ -1,35 +1,3 @@
-
-
-
-*.bz2 filter=lfs diff=lfs merge=lfs -text
-*.ckpt filter=lfs diff=lfs merge=lfs -text
-*.ftz filter=lfs diff=lfs merge=lfs -text
-*.gz filter=lfs diff=lfs merge=lfs -text
-*.h5 filter=lfs diff=lfs merge=lfs -text
-*.joblib filter=lfs diff=lfs merge=lfs -text
-*.lfs.* filter=lfs diff=lfs merge=lfs -text
-*.mlmodel filter=lfs diff=lfs merge=lfs -text
-*.model filter=lfs diff=lfs merge=lfs -text
-*.msgpack filter=lfs diff=lfs merge=lfs -text
-*.npy filter=lfs diff=lfs merge=lfs -text
-*.npz filter=lfs diff=lfs merge=lfs -text
-*.onnx filter=lfs diff=lfs merge=lfs -text
-*.ot filter=lfs diff=lfs merge=lfs -text
-*.parquet filter=lfs diff=lfs merge=lfs -text
-*.pb filter=lfs diff=lfs merge=lfs -text
-*.pickle filter=lfs diff=lfs merge=lfs -text
-*.pkl filter=lfs diff=lfs merge=lfs -text
-*.pt filter=lfs diff=lfs merge=lfs -text
-*.pth filter=lfs diff=lfs merge=lfs -text
-*.rar filter=lfs diff=lfs merge=lfs -text
-*.safetensors filter=lfs diff=lfs merge=lfs -text
-saved_model/**/* filter=lfs diff=lfs merge=lfs -text
-*.tar.* filter=lfs diff=lfs merge=lfs -text
-*.tar filter=lfs diff=lfs merge=lfs -text
-*.tflite filter=lfs diff=lfs merge=lfs -text
-*.tgz filter=lfs diff=lfs merge=lfs -text
-*.wasm filter=lfs diff=lfs merge=lfs -text
-*.xz filter=lfs diff=lfs merge=lfs -text
-*.zip filter=lfs diff=lfs merge=lfs -text
-*.zst filter=lfs diff=lfs merge=lfs -text
-*tfevents* filter=lfs diff=lfs merge=lfs -text
+images/*.png filter=lfs diff=lfs merge=lfs -text
+images/*.jpg filter=lfs diff=lfs merge=lfs -text
+images/*.jpeg filter=lfs diff=lfs merge=lfs -text
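The practical effect of the `.gitattributes` hunk is that the default archive and model-weight patterns (`*.pt`, `*.safetensors`, and so on) are no longer routed through Git LFS; only images directly under `images/` are. A rough way to check which paths the three new patterns cover is sketched below. It is an approximation that assumes simple one-directory patterns like the ones in this diff and does not implement full gitattributes matching; `NEW_PATTERNS` and `lfs_tracked` are hypothetical names.

```python
from fnmatch import fnmatchcase

# The three patterns added to .gitattributes in this commit.
NEW_PATTERNS = ["images/*.png", "images/*.jpg", "images/*.jpeg"]


def lfs_tracked(path: str, patterns=NEW_PATTERNS) -> bool:
    """Approximate check: does `path` match any simple dir/glob pattern?

    Each path component is compared with the corresponding pattern
    component, so `*` never crosses a `/`.
    """
    parts = path.split("/")
    for pattern in patterns:
        pattern_parts = pattern.split("/")
        if len(parts) == len(pattern_parts) and all(
            fnmatchcase(part, pat) for part, pat in zip(parts, pattern_parts)
        ):
            return True
    return False


for candidate in ["images/card.jpg", "images/demo.png", "model.pt", "images/raw/scan.png"]:
    print(candidate, lfs_tracked(candidate))
```

Under these assumptions the two demo images match, while a weights file such as `model.pt` or an image in a subdirectory does not.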

=0.1.4 ADDED

@@ -0,0 +1,55 @@
+Collecting huggingface-hub>=0.30
+  Downloading huggingface_hub-1.1.3-py3-none-any.whl.metadata (13 kB)
+Collecting hf-transfer
+  Downloading hf_transfer-0.1.9-cp38-abi3-manylinux_2_17_x86_64.manylinux2014_x86_64.whl.metadata (1.7 kB)
+Requirement already satisfied: filelock in /opt/hostedtoolcache/Python/3.10.19/x64/lib/python3.10/site-packages (from huggingface-hub>=0.30) (3.20.0)
+Requirement already satisfied: fsspec>=2023.5.0 in /opt/hostedtoolcache/Python/3.10.19/x64/lib/python3.10/site-packages (from huggingface-hub>=0.30) (2025.10.0)
+Collecting hf-xet<2.0.0,>=1.2.0 (from huggingface-hub>=0.30)
+  Downloading hf_xet-1.2.0-cp37-abi3-manylinux_2_17_x86_64.manylinux2014_x86_64.whl.metadata (4.9 kB)
+Collecting httpx<1,>=0.23.0 (from huggingface-hub>=0.30)
+  Downloading httpx-0.28.1-py3-none-any.whl.metadata (7.1 kB)
+Requirement already satisfied: packaging>=20.9 in /opt/hostedtoolcache/Python/3.10.19/x64/lib/python3.10/site-packages (from huggingface-hub>=0.30) (25.0)
+Requirement already satisfied: pyyaml>=5.1 in /opt/hostedtoolcache/Python/3.10.19/x64/lib/python3.10/site-packages (from huggingface-hub>=0.30) (6.0.3)
+Collecting shellingham (from huggingface-hub>=0.30)
+  Downloading shellingham-1.5.4-py2.py3-none-any.whl.metadata (3.5 kB)
+Collecting tqdm>=4.42.1 (from huggingface-hub>=0.30)
+  Downloading tqdm-4.67.1-py3-none-any.whl.metadata (57 kB)
+Collecting typer-slim (from huggingface-hub>=0.30)
+  Downloading typer_slim-0.20.0-py3-none-any.whl.metadata (16 kB)
+Requirement already satisfied: typing-extensions>=3.7.4.3 in /opt/hostedtoolcache/Python/3.10.19/x64/lib/python3.10/site-packages (from huggingface-hub>=0.30) (4.15.0)
+Collecting anyio (from httpx<1,>=0.23.0->huggingface-hub>=0.30)
+  Downloading anyio-4.11.0-py3-none-any.whl.metadata (4.1 kB)
+Collecting certifi (from httpx<1,>=0.23.0->huggingface-hub>=0.30)
+  Downloading certifi-2025.11.12-py3-none-any.whl.metadata (2.5 kB)
+Collecting httpcore==1.* (from httpx<1,>=0.23.0->huggingface-hub>=0.30)
+  Downloading httpcore-1.0.9-py3-none-any.whl.metadata (21 kB)
+Collecting idna (from httpx<1,>=0.23.0->huggingface-hub>=0.30)
+  Downloading idna-3.11-py3-none-any.whl.metadata (8.4 kB)
+Collecting h11>=0.16 (from httpcore==1.*->httpx<1,>=0.23.0->huggingface-hub>=0.30)
+  Downloading h11-0.16.0-py3-none-any.whl.metadata (8.3 kB)
+Collecting exceptiongroup>=1.0.2 (from anyio->httpx<1,>=0.23.0->huggingface-hub>=0.30)
+  Downloading exceptiongroup-1.3.0-py3-none-any.whl.metadata (6.7 kB)
+Collecting sniffio>=1.1 (from anyio->httpx<1,>=0.23.0->huggingface-hub>=0.30)
+  Downloading sniffio-1.3.1-py3-none-any.whl.metadata (3.9 kB)
+Collecting click>=8.0.0 (from typer-slim->huggingface-hub>=0.30)
+  Downloading click-8.3.0-py3-none-any.whl.metadata (2.6 kB)
+Downloading huggingface_hub-1.1.3-py3-none-any.whl (515 kB)
+Downloading hf_xet-1.2.0-cp37-abi3-manylinux_2_17_x86_64.manylinux2014_x86_64.whl (3.3 MB)
+   ━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 3.3/3.3 MB 164.6 MB/s 0:00:00
+Downloading httpx-0.28.1-py3-none-any.whl (73 kB)
+Downloading httpcore-1.0.9-py3-none-any.whl (78 kB)
+Downloading hf_transfer-0.1.9-cp38-abi3-manylinux_2_17_x86_64.manylinux2014_x86_64.whl (3.6 MB)
+   ━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 3.6/3.6 MB 615.8 MB/s 0:00:00
+Downloading h11-0.16.0-py3-none-any.whl (37 kB)
+Downloading tqdm-4.67.1-py3-none-any.whl (78 kB)
+Downloading anyio-4.11.0-py3-none-any.whl (109 kB)
+Downloading exceptiongroup-1.3.0-py3-none-any.whl (16 kB)
+Downloading idna-3.11-py3-none-any.whl (71 kB)
+Downloading sniffio-1.3.1-py3-none-any.whl (10 kB)
+Downloading certifi-2025.11.12-py3-none-any.whl (159 kB)
+Downloading shellingham-1.5.4-py2.py3-none-any.whl (9.8 kB)
+Downloading typer_slim-0.20.0-py3-none-any.whl (47 kB)
+Downloading click-8.3.0-py3-none-any.whl (107 kB)
+Installing collected packages: tqdm, sniffio, shellingham, idna, hf-xet, hf-transfer, h11, exceptiongroup, click, certifi, typer-slim, httpcore, anyio, httpx, huggingface-hub
+
+Successfully installed anyio-4.11.0 certifi-2025.11.12 click-8.3.0 exceptiongroup-1.3.0 h11-0.16.0 hf-transfer-0.1.9 hf-xet-1.2.0 httpcore-1.0.9 httpx-0.28.1 huggingface-hub-1.1.3 idna-3.11 shellingham-1.5.4 sniffio-1.3.1 tqdm-4.67.1 typer-slim-0.20.0
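A file named `=0.1.4` that contains pip output is what a POSIX shell typically produces when a version specifier is left unquoted: in `pip install hf-transfer>=0.1.4`, the `>=0.1.4` part is parsed as a redirection of stdout to a file called `=0.1.4`. This is an inference from the log (pip reports `Collecting hf-transfer` with no version constraint), not something the commit states. Below is a minimal sketch of building the command with proper quoting, assuming the workflow intended these two requirements; `build_pip_command` is a hypothetical helper.

```python
import shlex


def build_pip_command(requirements):
    """Return a shell-safe `pip install` command line.

    shlex.join quotes any argument containing shell metacharacters such as
    `>`, so the shell passes it to pip instead of treating it as a redirect.
    """
    return shlex.join(["pip", "install", *requirements])


command = build_pip_command(["huggingface-hub>=0.30", "hf-transfer>=0.1.4"])
print(command)  # pip install 'huggingface-hub>=0.30' 'hf-transfer>=0.1.4'
```

In a workflow file the same effect comes from quoting each requirement by hand; the sketch only shows why the quotes matter.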

README.md CHANGED

@@ -1,12 +1,73 @@
 ---
-title: OCR
-emoji:
-colorFrom:
-colorTo:
-sdk: gradio
-sdk_version:
-app_file: app.py
+title: "OCR Text Detection"
+emoji: "📄"
+colorFrom: "blue"
+colorTo: "green"
+sdk: "gradio"
+sdk_version: "4.0.0"
+app_file: "app.py"
 pinned: false
 ---

-
+# 📄 OCR Text Detection
+
+A general Optical Character Recognition (OCR) application built with Gradio and EasyOCR for extracting text from images with high accuracy.
+
+## Features
+
+- **Multiple Upload Options**
+  - Upload images directly from your device
+  - Load images from URL
+  - Try demo images with one click
+
+- **Smart Text Detection**
+  - Automatic text detection with bounding boxes
+  - Confidence scores for each detected text
+  - Adjustable confidence threshold filtering
+
+- **Visual Annotations**
+  - Green bounding boxes around detected text
+  - Confidence scores displayed on the image
+  - Easy-to-read formatted text output
+
+- **User-Friendly Interface**
+  - Clean and modern UI design
+  - Real-time processing
+  - Copy extracted text with one click
+  - Responsive layout
+
+## How to Use
+
+1. **Upload an Image**: Choose from three options:
+   - Click "Upload File" tab to upload from your device
+   - Click "Image by URL" tab to load from a web URL
+   - Click on any demo image to try it instantly
+
+2. **Adjust Confidence**: Use the confidence threshold slider (0.0 - 1.0) to filter detections based on accuracy
+
+3. **View Results**:
+   - See the processed image with bounding boxes
+   - Read the extracted text in the text box below
+   - Copy the text using the copy button
+
+## Technology Stack
+
+- **EasyOCR**: State-of-the-art OCR engine
+- **Gradio**: Interactive web interface
+- **OpenCV**: Image processing
+- **NumPy**: Numerical operations
+
+## Supported Image Formats
+
+- JPG/JPEG
+- PNG
+- BMP
+- TIFF/TIF
+
+## 🌐 Made by
+
+[Techtics.ai](https://techtics.ai) - AI Solutions for Business
+
+---
+
+*Extract text from invoices, receipts, documents, screenshots, and more!*

app.py ADDED

@@ -0,0 +1,186 @@
+"""
+OCR Text Detection App
+======================
+Gradio app for OCR text extraction
+Features: File upload, URL upload, demo images, confidence filtering
+"""
+
+import cv2
+import easyocr
+import numpy as np
+from pathlib import Path
+import gradio as gr
+import urllib.request
+import tempfile
+import os
+import warnings
+
+warnings.filterwarnings('ignore')
+
+# Initialize EasyOCR reader once (reused for all images)
+reader = easyocr.Reader(['en'], gpu=False, verbose=False)
+
+
+def format_text_aligned(results):
+    """Format OCR results by grouping text by Y-coordinate (lines) and sorting by X (left-to-right)."""
+    if not results:
+        return ""
+
+    # Extract Y-center and X-min for each detection
+    detections = [(sum(p[1] for p in bbox) / len(bbox), min(p[0] for p in bbox), text) for bbox, text, _ in results]
+    if not detections:
+        return ""
+
+    # Calculate threshold to group detections on same line (30% of avg line spacing)
+    y_coords = [d[0] for d in detections]
+    y_threshold = (max(y_coords) - min(y_coords)) / len(set(int(y) for y in y_coords)) * 0.3
+
+    # Sort by Y (top to bottom), then X (left to right)
+    detections.sort(key=lambda x: (x[0], x[1]))
+    lines, current_line, current_y = [], [], detections[0][0] if detections else 0
+
+    # Group detections by similar Y coordinates into lines
+    for y, x, text in detections:
+        if abs(y - current_y) <= y_threshold:
+            current_line.append((x, text))
+        else:
+            if current_line:
+                lines.append(' '.join([t[1] for t in sorted(current_line, key=lambda x: x[0])]))
+            current_line, current_y = [(x, text)], y
+
+    if current_line:
+        lines.append(' '.join([t[1] for t in sorted(current_line, key=lambda x: x[0])]))
+
+    return '\n'.join(lines)
+
+
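To see what `format_text_aligned` does with real-looking input, the grouping logic can be run on a few synthetic detections. The sketch below re-implements the same steps without OpenCV or EasyOCR so it runs on its own; `group_into_lines` and `box` are hypothetical names, and the tuples assume the `(bbox, text, confidence)` shape that the code above unpacks.

```python
def group_into_lines(results):
    """Group (bbox, text, confidence) detections into text lines.

    Mirrors the approach in format_text_aligned: use each box's mean Y as
    its vertical position, start a new line when the Y gap exceeds a
    threshold, and order words within a line by their leftmost X.
    """
    if not results:
        return ""
    detections = [
        (sum(p[1] for p in bbox) / len(bbox), min(p[0] for p in bbox), text)
        for bbox, text, _ in results
    ]
    y_coords = [d[0] for d in detections]
    distinct_rows = len(set(int(y) for y in y_coords))
    y_threshold = (max(y_coords) - min(y_coords)) / distinct_rows * 0.3

    detections.sort(key=lambda d: (d[0], d[1]))
    lines, current_line, current_y = [], [], detections[0][0]
    for y, x, text in detections:
        if abs(y - current_y) <= y_threshold:
            current_line.append((x, text))
        else:
            if current_line:
                ordered = sorted(current_line, key=lambda item: item[0])
                lines.append(" ".join(t for _, t in ordered))
            current_line, current_y = [(x, text)], y
    if current_line:
        ordered = sorted(current_line, key=lambda item: item[0])
        lines.append(" ".join(t for _, t in ordered))
    return "\n".join(lines)


def box(x, y, w=40, h=10):
    """Hypothetical helper: axis-aligned 4-point box with top-left (x, y)."""
    return [[x, y], [x + w, y], [x + w, y + h], [x, y + h]]


sample = [
    (box(60, 12), "World", 0.95),   # same row as "Hello", 2 px lower
    (box(10, 10), "Hello", 0.98),
    (box(10, 50), "Second", 0.90),
    (box(70, 50), "line", 0.88),
]
print(group_into_lines(sample))
# Hello World
# Second line
```

One property worth noting: `current_y` stays at the Y of the first box on a line, so on a skewed scan a long line whose boxes drift vertically may be split once the drift exceeds the threshold.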

+def process_ocr(input_image, confidence_threshold=0.0):
+    """Process image with OCR and return annotated image + formatted text."""
+    if input_image is None:
+        return None, ""
+
+    # Convert RGB to BGR for OpenCV
+    image_bgr = cv2.cvtColor(input_image, cv2.COLOR_RGB2BGR)
+
+    # Perform OCR
+    results = reader.readtext(image_bgr)
+
+    # Filter by confidence threshold
+    filtered_results = [(bbox, text, conf) for bbox, text, conf in results if conf >= confidence_threshold]
+    formatted_text = format_text_aligned(filtered_results)
+
+    # Draw bounding boxes and labels on image
+    annotated_image = image_bgr.copy()
+    for bbox, text, confidence in filtered_results:
+        # Draw bounding box polygon
+        bbox_points = np.array([[int(p[0]), int(p[1])] for p in bbox], dtype=np.int32)
+        cv2.polylines(annotated_image, [bbox_points], isClosed=True, color=(0, 255, 0), thickness=2)
+
+        # Calculate position for text label
+        x_min, y_min = int(min(p[0] for p in bbox)), int(min(p[1] for p in bbox))
+        label = f"{text} ({confidence:.2f})"
+        (w, h), _ = cv2.getTextSize(label, cv2.FONT_HERSHEY_SIMPLEX, 0.4, 1)
+
+        # Position text above or below box based on Y position
+        text_y = y_min - 5 if y_min > 20 else y_min + 20
+
+        # Draw background rectangle and text
+        cv2.rectangle(annotated_image, (x_min - 2, text_y - h - 2), (x_min + w + 2, text_y + 2), (0, 255, 0), -1)
+        cv2.putText(annotated_image, label, (x_min, text_y), cv2.FONT_HERSHEY_SIMPLEX, 0.4, (0, 0, 0), 1)
+
+    return cv2.cvtColor(annotated_image, cv2.COLOR_BGR2RGB), formatted_text or ""
+
+
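Two small rules inside `process_ocr` are easy to check in isolation: the inclusive confidence filter (`conf >= confidence_threshold`) and the label placement rule (`y_min - 5` unless the box is within 20 px of the top edge). The sketch below exercises both on made-up detections without needing OpenCV; `filter_by_confidence` and `label_baseline_y` are hypothetical names, and the sample values are invented for illustration.

```python
def filter_by_confidence(results, threshold):
    """Keep (bbox, text, confidence) tuples whose confidence >= threshold."""
    return [r for r in results if r[2] >= threshold]


def label_baseline_y(y_min):
    """Baseline Y for the label: above the box, or below its top edge
    when the box sits within 20 px of the top of the image."""
    return y_min - 5 if y_min > 20 else y_min + 20


detections = [
    ([[0, 0], [50, 0], [50, 12], [0, 12]], "INVOICE", 0.92),
    ([[0, 30], [50, 30], [50, 42], [0, 42]], "T0tal", 0.28),
    ([[0, 60], [50, 60], [50, 72], [0, 72]], "42.00", 0.30),
]

kept = filter_by_confidence(detections, threshold=0.3)
print([text for _, text, _ in kept])                         # ['INVOICE', '42.00']
print([label_baseline_y(bbox[0][1]) for bbox, _, _ in kept])  # [20, 55]
```

Because the comparison is inclusive, a detection scoring exactly the slider value (0.3 by default in this app) is kept.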

+# Load sample images for demo gallery
+exts = ('.jpg', '.jpeg', '.png', '.bmp', '.tiff', '.tif')
+sample_images = sorted([str(f) for f in Path('images').iterdir() if f.suffix.lower() in exts])[:3]
+
+# CSS for professional styling
+css = """
+.gradio-container {font-family: 'Segoe UI', sans-serif; max-width: 1400px; margin: 0 auto; overflow-x: hidden;}
+body, html {overflow-x: hidden; scrollbar-width: none;}
+::-webkit-scrollbar {display: none;}
+h1 {text-align: center; color: #042AFF; margin-bottom: 1rem; font-size: 2.5rem; font-weight: bold; letter-spacing: -0.5px;}
+.description {text-align: center; color: #6b7280; margin-bottom: 0.3rem; font-size: 1.05rem; line-height: 1.6;}
+.credits {text-align: center; color: #f2faf4; margin-bottom: 2rem; margin-top: 0; font-size: 1rem;}
+.credits a {color: #042AFF; text-decoration: none; font-weight: bold; transition: color 0.3s ease;}
+.credits a:hover {color: #111F68; text-decoration: underline;}
+"""
+
+# Create Gradio interface
+with gr.Blocks(title="OCR Text Detection", theme=gr.themes.Soft(), css=css) as demo:
+    gr.Markdown("# 📄 OCR Text Detection")
+    gr.Markdown("<div class='description'>Extract text from images with bounding boxes and confidence scores. Upload an image or select a demo image to get started.</div>", elem_classes=["description"])
+    gr.Markdown("<div class='credits' style='text-align: center;'>Made by <a href='https://techtics.ai' target='_blank' style='color: #042AFF; text-decoration: none; font-weight: bold;'>Techtics.ai</a></div>", elem_classes=["credits"])
+
+    # Main layout: Two columns
+    with gr.Row():
+        # Column 1: Upload area with tabs
+        with gr.Column(scale=1):
+            with gr.Tabs():
+                with gr.Tab("Upload File"):
+                    image_input = gr.Image(label="Upload Image", type="numpy", height=400)
+                with gr.Tab("Image by URL"):
+                    url_input = gr.Textbox(label="Image URL", placeholder="Enter image URL (jpg, png, etc.)", lines=1)
+                    url_btn = gr.Button("Load Image from URL", variant="primary")
+
+            # Demo images gallery
+            if sample_images:
+                gr.Markdown("### Demo Images (Click to load)")
+                demo_gallery = gr.Gallery(value=sample_images, columns=3, rows=1, height="40px", show_label=False, container=False, allow_preview=False, object_fit="contain")
+
+        # Column 2: Processed image and confidence slider
+        with gr.Column(scale=1):
+            gr.Markdown("### Processed Image")
+            annotated_output = gr.Image(label="", type="numpy", height=400, visible=True)
+            confidence_slider = gr.Slider(minimum=0.0, maximum=1.0, value=0.3, step=0.05, label="Confidence Threshold", info="Filter detections by minimum confidence score")
+
+    # Text output below both columns (full width, hidden until processing)
+    text_output = gr.Textbox(label="Extracted Text", value="", placeholder="Extracted text will appear here after processing...", lines=12, interactive=True, show_copy_button=True, visible=False)
+
+    # Load image from URL
+    def load_from_url(url):
+        """Download and load image from URL."""
+        if not url or not url.strip():
+            return None
+        try:
+            req = urllib.request.Request(url.strip(), headers={'User-Agent': 'Mozilla/5.0'})
+            with urllib.request.urlopen(req, timeout=10) as response:
+                with tempfile.NamedTemporaryFile(delete=False, suffix='.jpg') as tmp_file:
+                    tmp_file.write(response.read())
+                    tmp_path = tmp_file.name
+            img = cv2.imread(tmp_path)
+            os.unlink(tmp_path)
+            return cv2.cvtColor(img, cv2.COLOR_BGR2RGB) if img is not None else None
+        except Exception:
+            return None
+
+    # Load demo image from gallery
+    def load_from_gallery(evt: gr.SelectData):
+        """Load demo image when clicked."""
+        if evt.index < len(sample_images):
+            img = cv2.imread(sample_images[evt.index])
+            return cv2.cvtColor(img, cv2.COLOR_BGR2RGB) if img is not None else None
+        return None
+
+    # Event handlers
+    url_btn.click(fn=load_from_url, inputs=url_input, outputs=image_input)
+    url_input.submit(fn=load_from_url, inputs=url_input, outputs=image_input)
+    if sample_images:
+        demo_gallery.select(fn=load_from_gallery, outputs=image_input)
+
+    # Process image when it changes or confidence slider changes
+    def on_change(img, conf_thresh):
+        """Process image and update annotated image + text output."""
+        if img is None:
+            return gr.update(visible=True, value=None), gr.update(visible=False, value="")
+        annot, text = process_ocr(img, conf_thresh)
+        return gr.update(visible=True, value=annot), gr.update(visible=True, value=text or "")
+
+    image_input.change(fn=on_change, inputs=[image_input, confidence_slider], outputs=[annotated_output, text_output])
+    confidence_slider.change(fn=on_change, inputs=[image_input, confidence_slider], outputs=[annotated_output, text_output])
+
+
+if __name__ == "__main__":
+    demo.launch(share=True, server_name="0.0.0.0", server_port=7860)
+    # http://localhost:7860/ to access the app
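`load_from_url` hands whatever the user typed to `urllib.request.urlopen`, which also accepts non-HTTP schemes such as `file://`. One possible hardening step, which is not part of this commit, is to reject anything that is not plain HTTP(S) before fetching. Below is a minimal sketch using only the standard library; `is_fetchable_image_url` is a hypothetical name.

```python
from urllib.parse import urlparse


def is_fetchable_image_url(url: str) -> bool:
    """Return True only for http(s) URLs that include a host name."""
    if not url or not url.strip():
        return False
    parsed = urlparse(url.strip())
    return parsed.scheme in ("http", "https") and bool(parsed.netloc)


for candidate in [
    "https://example.com/receipt.png",
    "file:///etc/hostname",
    "   ",
    "example.com/a.jpg",
]:
    print(repr(candidate), is_fetchable_image_url(candidate))
```

A check like this could run at the top of `load_from_url`, returning `None` early in the same way the existing empty-string guard does.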

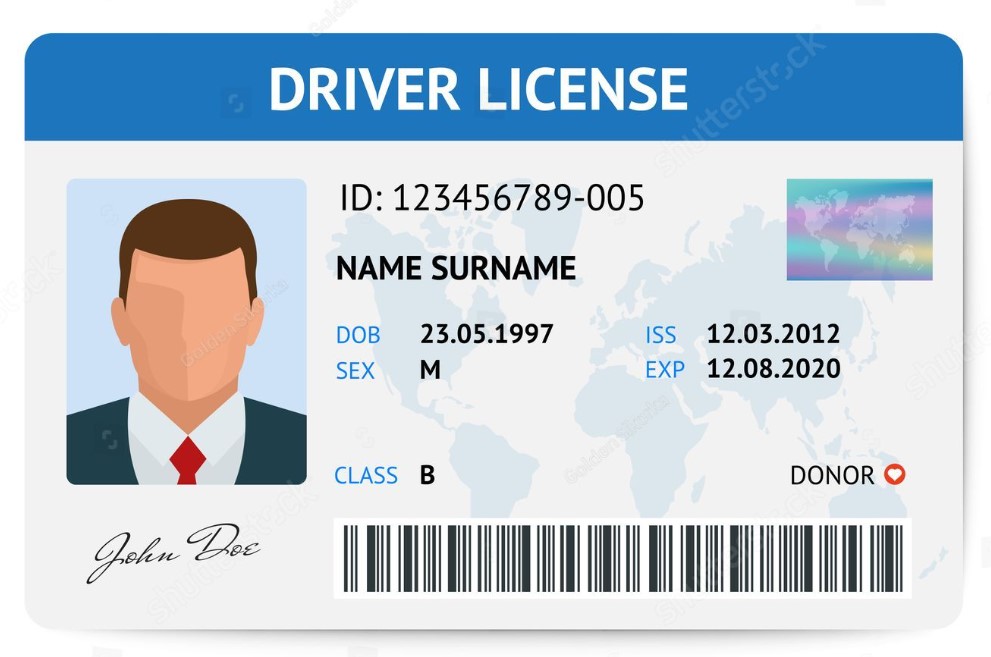

images/card.jpg

ADDED

|

Git LFS Details

|

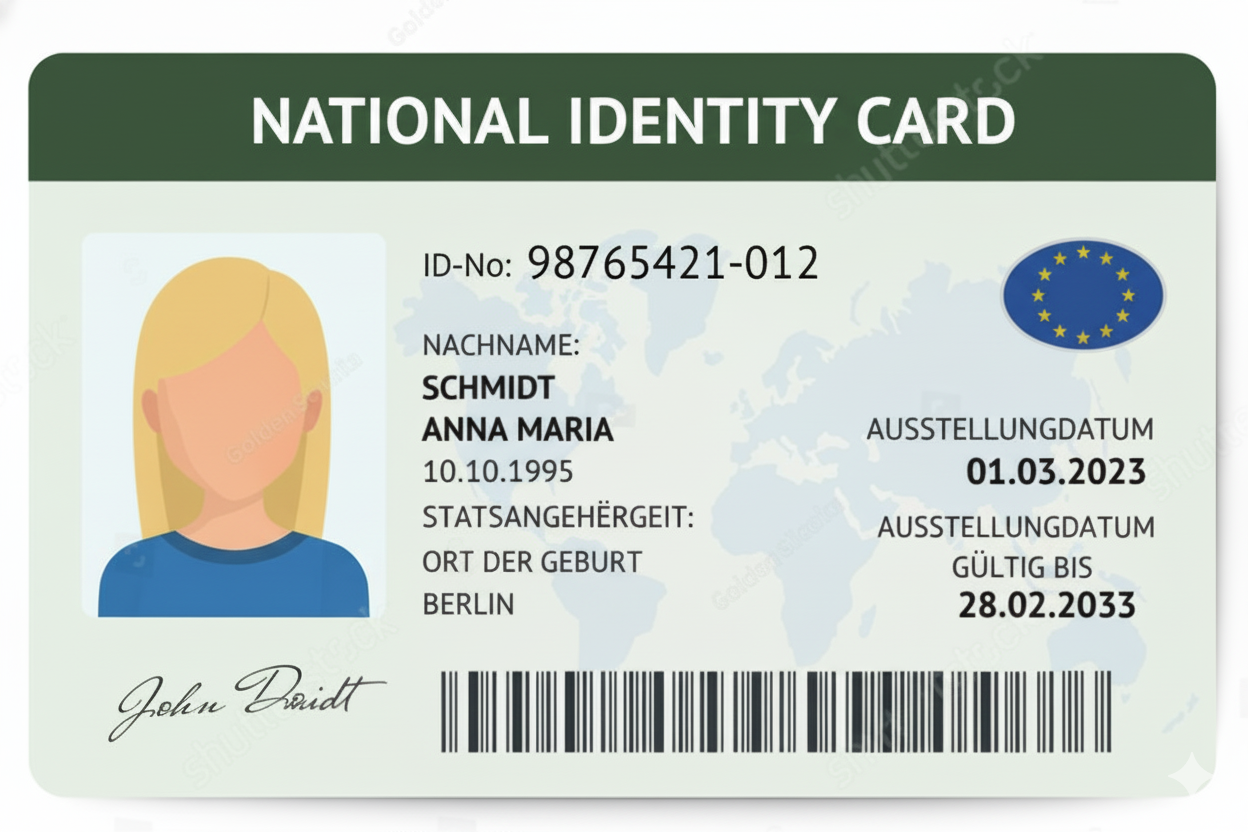

images/demo.png

ADDED

|

Git LFS Details

|

images/google.png

ADDED

|

Git LFS Details

|

requirements.txt

ADDED

|

Binary file (64 Bytes). View file

|

|

|