Empathic-Insight-Voice-Plus

Empathic-Insight-Voice-Plus extends laion/Empathic-Insight-Voice-Small with 4 additional audio quality expert models. These new experts predict overall audio quality, speech quality, background noise quality, and content enjoyment from the same frozen laion/BUD-E-Whisper encoder embeddings.

This repository is fully compatible with the original Empathic-Insight-Voice-Small suite. It uses the same Whisper encoder (based on OpenAI Whisper Small) and the same MLP hidden architecture (proj=64, hidden=[64, 32, 16]). The only difference is the input: the new quality experts take pooled features (mean, min, max, and std pooling over the encoder sequence dimension, concatenated into a 3072-d vector) instead of the full flattened sequence. This makes them far more compact: ~203K parameters each vs. ~73.7M for the full-sequence emotion experts.
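The parameter counts quoted above can be checked with simple arithmetic. The sketch below counts weights and biases for the layer sizes given in this card (proj=64, hidden=[64, 32, 16], one output unit); the helper names `linear_params` and `mlp_params` are illustrative and not part of the repository.

```python
def linear_params(n_in: int, n_out: int) -> int:
    """Weights plus biases of one fully connected layer."""
    return n_in * n_out + n_out


def mlp_params(input_dim: int, proj_dim: int = 64, hidden=(64, 32, 16)) -> int:
    """Projection layer, hidden layers, and the final 1-unit output layer."""
    total = linear_params(input_dim, proj_dim)
    current = proj_dim
    for h in hidden:
        total += linear_params(current, h)
        current = h
    return total + linear_params(current, 1)


pooled_expert = mlp_params(4 * 768)       # mean + min + max + std pooling
full_seq_expert = mlp_params(1500 * 768)  # flattened encoder sequence

print(f"pooled:        {pooled_expert:,}")    # 203,457
print(f"full sequence: {full_seq_expert:,}")  # 73,734,849
```

Almost all of the difference comes from the first projection layer, whose input shrinks from 1500 * 768 values to 4 * 768.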

This work is based on the research paper: "EMONET-VOICE: A Fine-Grained, Expert-Verified Benchmark for Speech Emotion Detection"

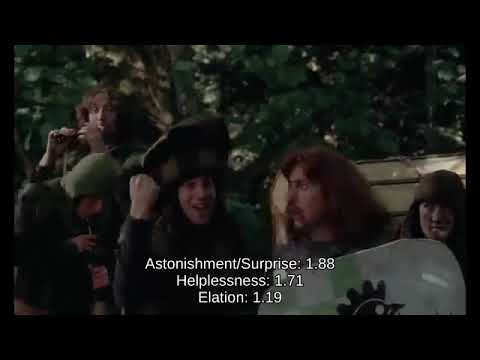


Model Description

The Empathic-Insight-Voice-Plus suite combines the original 54+ emotion/attribute MLP experts from Empathic-Insight-Voice-Small with 4 new audio quality regression experts. All models use embeddings from the fine-tuned Whisper model laion/BUD-E-Whisper.

The original emotion experts were trained on LAION'S GOT TALENT, a large-scale, multilingual synthetic voice-acting dataset (5,000 hours), and on an "in the wild" dataset of voice snippets (5,000 hours). The new quality experts were trained on the mitermix/balanced-audio-score-datasets dataset.

The quality scores are distilled from two established audio quality models:

- Overall Quality, Speech Quality, Background Quality: Distilled from Microsoft's DNSMOS (Deep Noise Suppression Mean Opinion Score), a non-intrusive speech quality estimator.

- Content Enjoyment: Distilled from Meta's AudioBox content enjoyment scoring model.

New Quality Expert Scores

| Expert | Description | Source | Expected Range | Typical Mean | Unit |
|---|---|---|---|---|---|
| Overall Quality | Overall perceived audio quality score. Higher is better. | DNSMOS | 1.0 - 3.7 | ~2.4 | MOS-like |
| Speech Quality | Quality of the speech signal itself (clarity, naturalness). Higher is better. | DNSMOS | 1.0 - 2.4 | ~1.9 | MOS-like |
| Background Quality | Quality of the background (absence of noise/artifacts). Higher is better. | DNSMOS | 1.0 - 4.3 | ~3.2 | MOS-like |
| Content Enjoyment | How engaging/enjoyable the spoken content is. Higher is better. | Meta AudioBox | 1.9 - 5.1 | ~4.1 | MOS-like |
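Because each expert has a different expected range, raw scores are not directly comparable across experts. One possible way to put them on a common footing is to rescale each score to [0, 1] using the ranges from the table. This is only a sketch: `EXPECTED_RANGES` and `normalize_score` are hypothetical names, and clipping out-of-range predictions is an assumption rather than something the models do themselves.

```python
# Expected ranges copied from the table above (low, high).
EXPECTED_RANGES = {
    "Overall_Quality": (1.0, 3.7),
    "Speech_Quality": (1.0, 2.4),
    "Background_Quality": (1.0, 4.3),
    "Content_Enjoyment": (1.9, 5.1),
}


def normalize_score(label: str, score: float) -> float:
    """Linearly map a raw score to [0, 1]; values outside the range are clipped."""
    low, high = EXPECTED_RANGES[label]
    scaled = (score - low) / (high - low)
    return min(1.0, max(0.0, scaled))


# The typical means from the table, used here purely as example inputs.
typical = {
    "Overall_Quality": 2.4,
    "Speech_Quality": 1.9,
    "Background_Quality": 3.2,
    "Content_Enjoyment": 4.1,
}
for label, score in typical.items():
    print(f"{label}: raw={score:.2f} normalized={normalize_score(label, score):.2f}")
```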

Validation Performance

| Expert | Val MAE | Pearson r | Val Samples |
|---|---|---|---|
| Overall Quality | 0.26 | 0.899 | 200 |
| Speech Quality | 0.30 | 0.517 | 200 |
| Background Quality | 0.35 | 0.865 | 200 |
| Content Enjoyment | 0.34 | 0.691 | 200 |
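For readers who want to reproduce these two metrics on their own held-out data, the standard definitions are sketched below in plain Python. This card does not state how the reported values were computed, so treat this as the textbook formulas rather than the authors' evaluation script; the sample numbers are invented.

```python
import math


def mean_absolute_error(targets, predictions):
    """Average absolute difference between targets and predictions."""
    return sum(abs(t - p) for t, p in zip(targets, predictions)) / len(targets)


def pearson_r(targets, predictions):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(targets)
    mean_t = sum(targets) / n
    mean_p = sum(predictions) / n
    cov = sum((t - mean_t) * (p - mean_p) for t, p in zip(targets, predictions))
    var_t = sum((t - mean_t) ** 2 for t in targets)
    var_p = sum((p - mean_p) ** 2 for p in predictions)
    return cov / math.sqrt(var_t * var_p)


# Made-up numbers for illustration only, not model outputs.
targets = [1.0, 2.0, 3.0, 4.0]
predictions = [1.5, 2.5, 3.5, 4.5]
print("MAE:", mean_absolute_error(targets, predictions))
print("Pearson r:", pearson_r(targets, predictions))
```

The two metrics answer different questions: MAE measures how far predictions are from the targets on the score scale, while Pearson r measures how well predictions track the ordering and spread of the targets. In this toy example a constant offset produces a non-zero MAE but a correlation of essentially 1.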

How to Use

Full Inference: Emotion Scores + Quality Scores

The following example loads both the original 54 emotion/attribute experts and the 4 new quality experts, then runs inference on a single audio file.

```python
import torch
import torch.nn as nn
import numpy as np
import librosa
from pathlib import Path
from transformers import WhisperModel, WhisperFeatureExtractor
from huggingface_hub import snapshot_download
import gc

# --- Configuration ---
SAMPLING_RATE = 16000
MAX_AUDIO_SECONDS = 30.0
WHISPER_MODEL_ID = "laion/BUD-E-Whisper"

# Original emotion experts
HF_EMOTION_REPO_ID = "laion/Empathic-Insight-Voice-Small"
# New quality experts (this repo)
HF_QUALITY_REPO_ID = "laion/Empathic-Insight-Voice-Plus"

WHISPER_SEQ_LEN = 1500
WHISPER_EMBED_DIM = 768
PROJECTION_DIM = 64
MLP_HIDDEN_DIMS = [64, 32, 16]
MLP_DROPOUTS = [0.0, 0.1, 0.1, 0.1]
POOLED_DIM = 3072  # 4 * 768 (mean + min + max + std)

DEVICE = torch.device("cuda" if torch.cuda.is_available() else "cpu")


# --- Model Definitions ---
class FullEmbeddingMLP(nn.Module):
    """Original emotion expert architecture (full sequence input)."""

    def __init__(self, seq_len, embed_dim, projection_dim, mlp_hidden_dims, mlp_dropout_rates):
        super().__init__()
        self.flatten = nn.Flatten()
        self.proj = nn.Linear(seq_len * embed_dim, projection_dim)
        layers = [nn.ReLU(), nn.Dropout(mlp_dropout_rates[0])]
        current_dim = projection_dim
        for i, h_dim in enumerate(mlp_hidden_dims):
            layers.extend([nn.Linear(current_dim, h_dim), nn.ReLU(), nn.Dropout(mlp_dropout_rates[i + 1])])
            current_dim = h_dim
        layers.append(nn.Linear(current_dim, 1))
        self.mlp = nn.Sequential(*layers)

    def forward(self, x):
        if x.ndim == 4 and x.shape[1] == 1:
            x = x.squeeze(1)
        return self.mlp(self.proj(self.flatten(x)))


class PooledEmbeddingMLP(nn.Module):
    """New quality expert architecture (pooled features input)."""

    def __init__(self, input_dim, projection_dim, mlp_hidden_dims, mlp_dropout_rates):
        super().__init__()
        self.proj = nn.Linear(input_dim, projection_dim)
        layers = [nn.ReLU(), nn.Dropout(mlp_dropout_rates[0])]
        current_dim = projection_dim
        for i, h_dim in enumerate(mlp_hidden_dims):
            layers.extend([nn.Linear(current_dim, h_dim), nn.ReLU(), nn.Dropout(mlp_dropout_rates[i + 1])])
            current_dim = h_dim
        layers.append(nn.Linear(current_dim, 1))
        self.mlp = nn.Sequential(*layers)

    def forward(self, x):
        return self.mlp(self.proj(x))


def pool_embedding(embedding):
    """Pool encoder output [1, seq_len, 768] -> [1, 3072] using mean+min+max+std."""
    mean_pool = embedding.mean(dim=1)
    min_pool = embedding.min(dim=1).values
    max_pool = embedding.max(dim=1).values
    std_pool = embedding.std(dim=1)
    return torch.cat([mean_pool, min_pool, max_pool, std_pool], dim=1)


# Quality expert file mapping
QUALITY_EXPERTS = {
    "Overall_Quality": "model_score_overall_quality_best.pth",
    "Speech_Quality": "model_score_speech_quality_best.pth",
    "Background_Quality": "model_score_background_quality_best.pth",
    "Content_Enjoyment": "model_score_content_enjoyment_best.pth",
}


@torch.no_grad()
def analyze_audio(audio_path: str):
    """Run full inference: emotions + quality scores."""
    # Load Whisper encoder
    print("Loading Whisper encoder...")
    feature_extractor = WhisperFeatureExtractor.from_pretrained(WHISPER_MODEL_ID)
    whisper = WhisperModel.from_pretrained(WHISPER_MODEL_ID, low_cpu_mem_usage=True)
    encoder = whisper.get_encoder().to(DEVICE).eval()
    del whisper; gc.collect()

    # Load audio
    print(f"Loading audio: {audio_path}")
    waveform, sr = librosa.load(audio_path, sr=SAMPLING_RATE, mono=True)
    max_samples = int(MAX_AUDIO_SECONDS * SAMPLING_RATE)
    if len(waveform) > max_samples:
        waveform = waveform[:max_samples]
    print(f"  Duration: {len(waveform)/SAMPLING_RATE:.2f}s")

    # Get encoder embedding
    inputs = feature_extractor(waveform, sampling_rate=SAMPLING_RATE, return_tensors="pt")
    input_features = inputs.input_features.to(DEVICE)
    embedding = encoder(input_features).last_hidden_state  # [1, 1500, 768]

    # Prepare pooled features for quality experts
    pooled = pool_embedding(embedding.float())  # [1, 3072]

    # Download model repos
    emotion_dir = Path(snapshot_download(HF_EMOTION_REPO_ID, allow_patterns=["*.pth"]))
    quality_dir = Path(snapshot_download(HF_QUALITY_REPO_ID, allow_patterns=["*.pth"]))

    results = {}

    # --- Run emotion experts (full sequence) ---
    print("\n--- Emotion Scores ---")
    embedding_cpu = embedding.cpu().float()
    for pth_file in sorted(emotion_dir.glob("model_*_best.pth")):
        name = pth_file.stem.replace("model_", "").replace("_best", "")
        model = FullEmbeddingMLP(WHISPER_SEQ_LEN, WHISPER_EMBED_DIM, PROJECTION_DIM, MLP_HIDDEN_DIMS, MLP_DROPOUTS)
        state_dict = torch.load(pth_file, map_location="cpu", weights_only=True)
        if any(k.startswith("_orig_mod.") for k in state_dict):
            state_dict = {k.replace("_orig_mod.", ""): v for k, v in state_dict.items()}
        model.load_state_dict(state_dict)
        model.eval()
        score = model(embedding_cpu).item()
        results[name] = score
        del model; gc.collect()

    # Print top emotions
    sorted_emotions = sorted(results.items(), key=lambda x: x[1], reverse=True)
    for name, score in sorted_emotions[:5]:
        print(f"  {name}: {score:.4f}")

    # --- Run quality experts (pooled features) ---
    print("\n--- Quality Scores ---")
    pooled_cpu = pooled.cpu().float()
    for label, filename in QUALITY_EXPERTS.items():
        pth_path = quality_dir / filename
        if not pth_path.exists():
            print(f"  {label}: model not found")
            continue
        model = PooledEmbeddingMLP(POOLED_DIM, PROJECTION_DIM, MLP_HIDDEN_DIMS, MLP_DROPOUTS)
        model.load_state_dict(torch.load(pth_path, map_location="cpu", weights_only=True))
        model.eval()
        score = model(pooled_cpu).item()
        results[label] = score
        print(f"  {label}: {score:.4f}")
        del model; gc.collect()

    return results


# --- Example Usage ---
# results = analyze_audio("your_audio_file.mp3")
```
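`analyze_audio` returns a single dictionary that mixes emotion/attribute scores with the four quality scores. A small post-processing step, sketched below, separates the two groups and ranks the emotions. `split_results` and `top_k` are hypothetical helpers, and the emotion keys and all numbers in `example_results` are invented placeholders, not real model output.

```python
# Labels used for the quality experts in the example above.
QUALITY_KEYS = ("Overall_Quality", "Speech_Quality", "Background_Quality", "Content_Enjoyment")


def split_results(results: dict):
    """Separate quality scores from emotion/attribute scores."""
    quality = {k: v for k, v in results.items() if k in QUALITY_KEYS}
    emotions = {k: v for k, v in results.items() if k not in QUALITY_KEYS}
    return emotions, quality


def top_k(scores: dict, k: int = 3):
    """Return the k highest-scoring (name, score) pairs, best first."""
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)[:k]


# Placeholder standing in for the return value of analyze_audio().
example_results = {
    "Amusement": 2.1,
    "Interest": 1.4,
    "Contentment": 0.9,
    "Overall_Quality": 2.6,
    "Speech_Quality": 1.8,
}

emotions, quality = split_results(example_results)
print("Top emotions:", top_k(emotions, k=2))
print("Quality:", quality)
```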

Quality Scores Only (Lightweight)

If you only need the 4 quality scores without the emotion experts:

```python
import torch
import torch.nn as nn
import numpy as np
import librosa
from transformers import WhisperModel, WhisperFeatureExtractor
from huggingface_hub import hf_hub_download

DEVICE = torch.device("cuda" if torch.cuda.is_available() else "cpu")


class PooledEmbeddingMLP(nn.Module):
    def __init__(self, input_dim=3072, projection_dim=64,
                 mlp_hidden_dims=[64, 32, 16], mlp_dropout_rates=[0.0, 0.1, 0.1, 0.1]):
        super().__init__()
        self.proj = nn.Linear(input_dim, projection_dim)
        layers = [nn.ReLU(), nn.Dropout(mlp_dropout_rates[0])]
        current_dim = projection_dim
        for i, h_dim in enumerate(mlp_hidden_dims):
            layers.extend([nn.Linear(current_dim, h_dim), nn.ReLU(), nn.Dropout(mlp_dropout_rates[i + 1])])
            current_dim = h_dim
        layers.append(nn.Linear(current_dim, 1))
        self.mlp = nn.Sequential(*layers)

    def forward(self, x):
        return self.mlp(self.proj(x))


# Load encoder
feature_extractor = WhisperFeatureExtractor.from_pretrained("laion/BUD-E-Whisper")
whisper = WhisperModel.from_pretrained("laion/BUD-E-Whisper", low_cpu_mem_usage=True)
encoder = whisper.get_encoder().to(DEVICE).eval()

# Load audio
waveform, sr = librosa.load("your_audio.mp3", sr=16000, mono=True)
inputs = feature_extractor(waveform, sampling_rate=16000, return_tensors="pt")

with torch.no_grad():
    embedding = encoder(inputs.input_features.to(DEVICE)).last_hidden_state  # [1, 1500, 768]

# Pool: mean + min + max + std
emb = embedding.float()
pooled = torch.cat([emb.mean(1), emb.min(1).values, emb.max(1).values, emb.std(1)], dim=1)  # [1, 3072]

# Run quality experts
EXPERTS = {
    "Overall_Quality": "model_score_overall_quality_best.pth",
    "Speech_Quality": "model_score_speech_quality_best.pth",
    "Background_Quality": "model_score_background_quality_best.pth",
    "Content_Enjoyment": "model_score_content_enjoyment_best.pth",
}

for label, filename in EXPERTS.items():
    path = hf_hub_download("laion/Empathic-Insight-Voice-Plus", filename)
    model = PooledEmbeddingMLP()
    model.load_state_dict(torch.load(path, map_location="cpu", weights_only=True))
    model.eval()
    with torch.no_grad():
        score = model(pooled.cpu()).item()
    print(f"{label}: {score:.4f}")
```
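To make the pooling step concrete, here is a dependency-free sketch of the same mean + min + max + std reduction on a tiny made-up sequence. It is an illustration of the idea, not the code used for training: `pool_sequence` is a hypothetical name, and the toy input has 3 frames of dimension 2 instead of 1500 frames of dimension 768. `statistics.stdev` computes the sample standard deviation (dividing by n - 1), which matches the default behaviour of `torch.std`.

```python
from statistics import mean, stdev


def pool_sequence(frames):
    """Reduce a [seq_len][embed_dim] list to one vector of length 4 * embed_dim."""
    columns = list(zip(*frames))  # one tuple of values per embedding dimension
    means = [mean(c) for c in columns]
    mins = [min(c) for c in columns]
    maxs = [max(c) for c in columns]
    stds = [stdev(c) for c in columns]  # sample std (n - 1)
    return means + mins + maxs + stds


# Toy input: seq_len=3, embed_dim=2 (values are arbitrary).
frames = [[1.0, 10.0], [2.0, 20.0], [3.0, 30.0]]
pooled = pool_sequence(frames)
print(len(pooled))  # 8, i.e. 4 * embed_dim
print(pooled)
```

With the real encoder output the same reduction turns 1500 * 768 values into 4 * 768 = 3072, which is why the pooled vector no longer depends on the sequence length.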

Architecture

The quality experts use a PooledEmbeddingMLP architecture:

```
Input: [batch, 3072]  (4 * 768: mean + min + max + std pooling over Whisper encoder sequence)
  -> Linear(3072, 64) -> ReLU -> Dropout(0.0)
  -> Linear(64, 64)   -> ReLU -> Dropout(0.1)
  -> Linear(64, 32)   -> ReLU -> Dropout(0.1)
  -> Linear(32, 16)   -> ReLU -> Dropout(0.1)
  -> Linear(16, 1)
Output: scalar score
```

~203K parameters per expert. Trained with Huber loss (delta=1.0), AdamW optimizer (lr=1e-3, weight_decay=1e-4), cosine annealing LR schedule over 50 epochs.
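For reference, the loss and learning-rate schedule named above have simple closed forms, sketched here in plain Python. The formulas are the standard definitions of the Huber loss and of cosine annealing; the minimum learning rate of 0 and the per-epoch (rather than per-step) update are assumptions, since this card does not specify them.

```python
import math


def huber_loss(prediction: float, target: float, delta: float = 1.0) -> float:
    """Quadratic for small errors, linear for errors larger than delta."""
    error = abs(prediction - target)
    if error <= delta:
        return 0.5 * error ** 2
    return delta * (error - 0.5 * delta)


def cosine_annealed_lr(epoch: int, total_epochs: int = 50,
                       base_lr: float = 1e-3, min_lr: float = 0.0) -> float:
    """Learning rate after `epoch` epochs; min_lr=0.0 is an assumption."""
    cosine = math.cos(math.pi * epoch / total_epochs)
    return min_lr + 0.5 * (base_lr - min_lr) * (1.0 + cosine)


print(huber_loss(2.5, 2.0))  # 0.125 (quadratic regime)
print(huber_loss(4.0, 2.0))  # 1.5   (linear regime)
for epoch in (0, 25, 50):
    print(epoch, cosine_annealed_lr(epoch))
```

With delta=1.0 and MOS-like targets, errors of more than one score point are penalized linearly, which limits the influence of outlier labels compared with a plain squared error.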

Training Data

The quality experts were trained on mitermix/balanced-audio-score-datasets (32.2 GB), which contains balanced distributions of audio quality scores across 5 dimensions; the four subsets used here are listed below. Each subset contains paired audio files and JSON metadata with ground truth scores.

| Subset | Source | Training Samples |
|---|---|---|
| Overall Quality | DNSMOS | 39,800 |
| Speech Quality | DNSMOS | 9,800 |
| Background Quality | DNSMOS | 39,800 |
| Content Enjoyment | Meta AudioBox | 19,800 |

Based On

- Whisper Encoder: laion/BUD-E-Whisper (OpenAI Whisper Small, fine-tuned)

- MLP Architecture: Same hidden layer structure as laion/Empathic-Insight-Voice-Small

- Emotion Experts: Fully compatible with the 54 emotion/attribute experts from Empathic-Insight-Voice-Small

Files

New quality experts (this repo):

- model_score_overall_quality_best.pth - Overall Quality expert (DNSMOS)

- model_score_speech_quality_best.pth - Speech Quality expert (DNSMOS)

- model_score_background_quality_best.pth - Background Quality expert (DNSMOS)

- model_score_content_enjoyment_best.pth - Content Enjoyment expert (Meta AudioBox)

Original emotion experts are loaded from laion/Empathic-Insight-Voice-Small (54 .pth files).
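The full inference example derives each emotion expert's name from its checkpoint filename by dropping the `model_` prefix and `_best` suffix. The sketch below applies the same rule to the four filenames in this repo. `expert_name` is a hypothetical helper; it uses `str.removeprefix` and `str.removesuffix` (Python 3.9+) instead of `replace`, so that only the leading and trailing markers are stripped.

```python
from pathlib import Path


def expert_name(checkpoint: str) -> str:
    """Strip the 'model_' prefix and '_best' suffix from a checkpoint filename."""
    stem = Path(checkpoint).stem  # drops the .pth extension
    return stem.removeprefix("model_").removesuffix("_best")


# Filenames as listed in this repository.
checkpoints = [
    "model_score_overall_quality_best.pth",
    "model_score_speech_quality_best.pth",
    "model_score_background_quality_best.pth",
    "model_score_content_enjoyment_best.pth",
]
for checkpoint in checkpoints:
    print(f"{checkpoint} -> {expert_name(checkpoint)}")
```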

Emotion Taxonomy

The core 40 emotion categories are (from EMONET-VOICE, Appendix A.1): Affection, Amusement, Anger, Astonishment/Surprise, Awe, Bitterness, Concentration, Confusion, Contemplation, Contempt, Contentment, Disappointment, Disgust, Distress, Doubt, Elation, Embarrassment, Emotional Numbness, Fatigue/Exhaustion, Fear, Helplessness, Hope/Enthusiasm/Optimism, Impatience and Irritability, Infatuation, Interest, Intoxication/Altered States of Consciousness, Jealousy & Envy, Longing, Malevolence/Malice, Pain, Pleasure/Ecstasy, Pride, Relief, Sadness, Sexual Lust, Shame, Sourness, Teasing, Thankfulness/Gratitude, Triumph.

Additional vocal attributes (e.g., Valence, Arousal, Gender, Age, Pitch characteristics) are also predicted by corresponding MLP models in the suite. The full list of predictable dimensions can be inferred from the FILENAME_PART_TO_TARGET_KEY_MAP in the Colab notebook.

Intended Use

These models are intended for research purposes in affective computing, speech emotion recognition (SER), human-AI interaction, and voice AI development. They can be used to:

- Analyze and predict fine-grained emotional states and vocal attributes from speech.

- Assess audio quality, speech clarity, background noise levels, and content enjoyment.

- Serve as a baseline for developing more advanced SER and audio quality assessment systems.

Out-of-Scope Use: These models are trained on synthetic speech and their generalization to spontaneous real-world speech needs further evaluation. They should not be used for making critical decisions about individuals, for surveillance, or in any manner that could lead to discriminatory outcomes or infringe on privacy without due diligence and ethical review.

Ethical Considerations

The EMONET-VOICE suite was developed with ethical considerations as a priority:

- Privacy Preservation: The use of synthetic voice generation fundamentally circumvents privacy concerns associated with collecting real human emotional expressions, especially for sensitive states.

- Responsible Use: These models are released for research. Users are urged to consider the ethical implications of their applications and avoid misuse, such as for emotional manipulation, surveillance, or in ways that could lead to unfair, biased, or harmful outcomes. The broader societal implications and mitigation of potential misuse of SER technology remain important ongoing considerations.